Capturing Braided Hairstyles

- Liwen Hu¹

- Chongyang Ma¹

- Linjie Luo²

- Li-Yi Wei³

- Hao Li¹

- ¹University of Southern California

- ²Adobe Research

- ³The University of Hong Kong

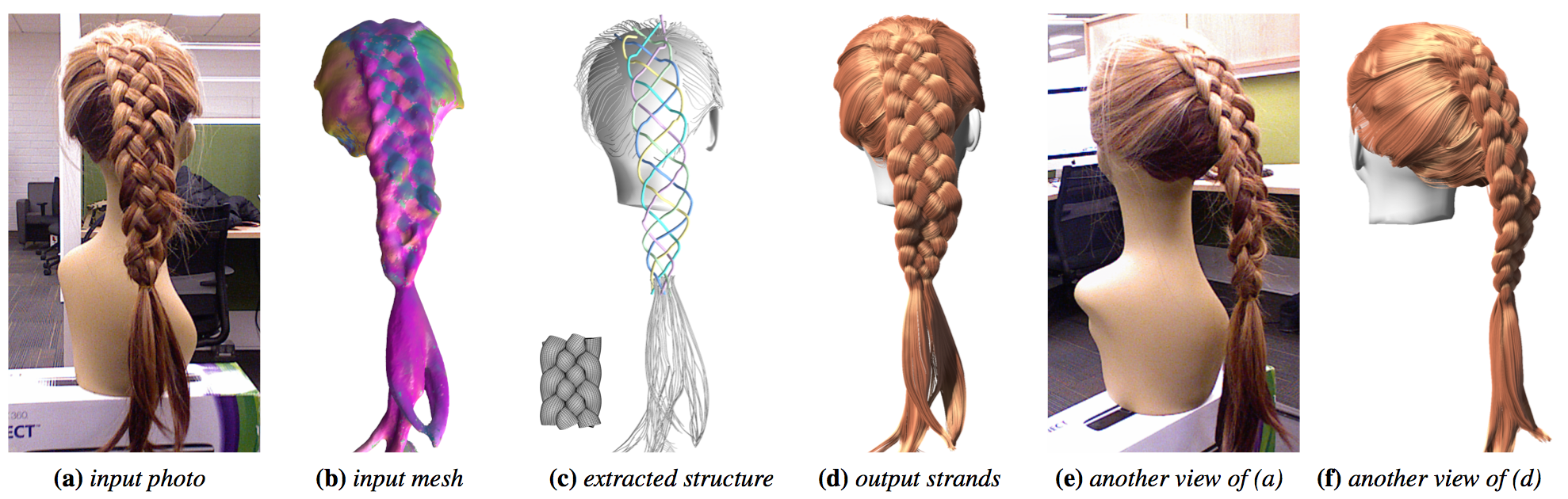

We capture the braided hairstyle (a) using a Kinect sensor and obtain an input mesh with a local 3D orientation for each vertex (b). Based on the information provided by the example patches in a database, we extract the centerlines (c) of the braid structure to synthesize the output strands (d).

Abstract

From fishtail to princess braids, these intricately woven structures define an important and popular class of hairstyle, frequently used for digital characters in computer graphics. In addition to the challenges created by the infinite range of styles, existing modeling and capture techniques are particularly constrained by the geometric and topological complexities. We propose a data-driven method to automatically reconstruct braided hairstyles from input data obtained from a single consumer RGB-D camera. Our approach covers the large variation of repetitive braid structures using a family of compact procedural braid models. From these models, we produce a database of braid patches and use a robust random sampling approach for data fitting. We then recover the input braid structures using a multi-label optimization algorithm and synthesize the intertwining hair strands of the braids. We demonstrate that minimal capture equipment is sufficient to effectively capture a wide range of complex braids with distinct shapes and structures.
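To give a rough sense of what a compact procedural braid model can look like, the sketch below generates centerlines for a simple repeating braid from phase-shifted sinusoids. This is an illustrative assumption, not necessarily the parameterization used in the paper: the function name `braid_centerlines` and the parameters `a`, `b`, `periods`, and `samples` are hypothetical.

```python
import math

def braid_centerlines(num_strands=3, samples=200, periods=2.0, a=1.0, b=0.5):
    """Return one polyline of (x, y, z) points per braid strand.

    Assumed model (illustrative only): each strand follows a figure-eight
    curve in the x-z plane while advancing along y, and strands differ
    only by an evenly spaced phase offset.
    """
    lines = []
    for k in range(num_strands):
        # Evenly spaced phase offsets make the strands take turns crossing.
        phase = 2.0 * math.pi * k / num_strands
        points = []
        for i in range(samples):
            t = 2.0 * math.pi * periods * i / (samples - 1)
            x = a * math.sin(t + phase)          # side-to-side swing
            y = t                                # progress along the braid axis
            z = b * math.sin(2.0 * (t + phase))  # over/under depth variation
            points.append((x, y, z))
        lines.append(points)
    return lines

if __name__ == "__main__":
    for k, line in enumerate(braid_centerlines()):
        print(f"strand {k}: {len(line)} points, starts at {line[0]}")
```

Varying `a`, `b`, and `num_strands` in such a model changes the width, thickness, and strand count of the repeating unit, which is the kind of variation a patch database built from procedural models would need to span.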

Keywords

Hair capture, braids, data-driven reconstruction

Paper