Robust Hair Capture Using Simulated Examples

- Liwen Hu¹

- Chongyang Ma¹

- Linjie Luo²

- Hao Li¹

- ¹University of Southern California

- ²Adobe Research

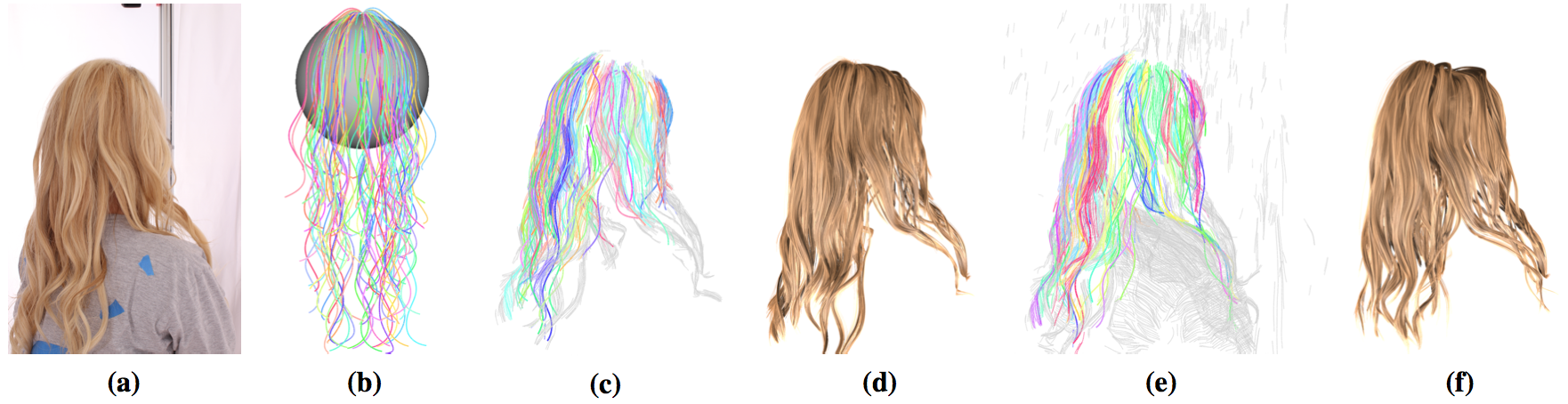

Our system takes as input a few images (a) and uses a database of simulated example strands (b) to discover structurally plausible configurations from the reconstructed cover strands (c) for final strand synthesis (d). Our method robustly fits example strands to the cover strands, which are computed from unprocessed, outlier-affected input data (e), to generate compelling reconstruction results (f).

Abstract

We introduce a data-driven hair capture framework based on example strands generated through hair simulation. Our method can robustly reconstruct faithful 3D hair models from unprocessed input point clouds containing large numbers of outliers. Current state-of-the-art techniques use geometrically inspired heuristics to derive global hair strand structures, which can yield implausible hair strands for hairstyles involving large occlusions, multiple layers, or wisps of varying lengths. We address this problem with a voting-based fitting algorithm that discovers structurally plausible configurations of the locally grown hair segments using a database of simulated examples. To generate these examples, we exhaustively sample the simulation configurations within the feasible parameter space constrained by the current input hairstyle. The number of necessary simulations can be further reduced by leveraging symmetry and constrained initial conditions. The final hairstyle can then be structurally represented by a limited number of examples. For constrained hairstyles such as ponytails, which are more difficult to simulate realistically, we let the user sketch a few strokes through an intuitive interface to generate strand examples. Our approach focuses on robustness and generality. Because our method produces structurally plausible results by construction, it offers improved control during hair digitization and avoids implausible hair synthesis for a wide range of hairstyles.
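The abstract does not spell out how the voting-based fitting works. As a rough illustration only, the Python sketch below shows one generic way such voting could be organized. It assumes that strands are polylines of 3D points, that a cover segment votes for an example strand when the mean distance from its points to the nearest example point falls below a hypothetical threshold `tau`, and that examples are then chosen greedily by how many still-unexplained segments they cover. The names `mean_closest_distance`, `vote`, and `select_examples` are hypothetical, and this is not the authors' implementation.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
Strand = Sequence[Point]  # assumption: a strand is a polyline of 3D points


def mean_closest_distance(segment: Strand, example: Strand) -> float:
    """Average distance from each point of `segment` to its nearest point on `example`."""
    total = 0.0
    for p in segment:
        total += min(math.dist(p, q) for q in example)
    return total / len(segment)


def vote(cover: List[Strand], examples: List[Strand], tau: float) -> List[List[int]]:
    """For each example strand, collect the indices of cover segments that vote for it.

    Hypothetical rule: a segment votes for an example if it lies within `tau` of it on average.
    """
    voters: List[List[int]] = [[] for _ in examples]
    for i, seg in enumerate(cover):
        for j, ex in enumerate(examples):
            if mean_closest_distance(seg, ex) < tau:
                voters[j].append(i)
    return voters


def select_examples(cover: List[Strand], examples: List[Strand],
                    tau: float, min_votes: int = 1) -> List[int]:
    """Greedily pick the example that explains the most not-yet-explained segments."""
    voters = vote(cover, examples, tau)
    explained: set = set()
    chosen: List[int] = []
    while True:
        best, best_gain = None, 0
        for j, segs in enumerate(voters):
            if j in chosen:
                continue
            gain = len(set(segs) - explained)
            if gain > best_gain:
                best, best_gain = j, gain
        # Stop when no remaining example gains enough new votes.
        if best is None or best_gain < min_votes:
            break
        chosen.append(best)
        explained.update(voters[best])
    return chosen
```

With two cover segments lying on an x-axis example strand and one on a y-axis example strand, this toy version selects those two examples and ignores a distant third one. A real system would also need to account for strand orientation, partial overlap, and root positions on the scalp, none of which this sketch models.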

Keywords

Hair capture, 3D reconstruction, data-driven modeling
