Analogy-Driven 3D Style Transfer

- Chongyang Ma (1, 3)

- Haibin Huang (2)

- Alla Sheffer (1)

- Evangelos Kalogerakis (2)

- Rui Wang (2)

- (1) University of British Columbia

- (2) University of Massachusetts Amherst

- (3) University of Southern California

Abstract

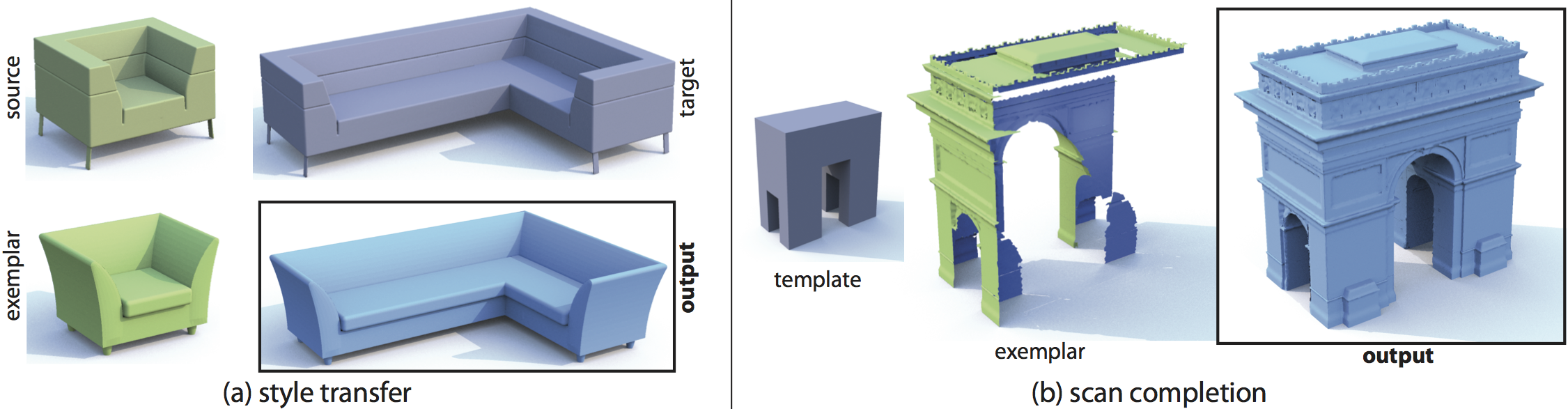

Style transfer aims to apply the style of an exemplar model to a target one, while retaining the target's structure. The main challenge in this process is to algorithmically distinguish style from structure, a high-level, potentially ill-posed cognitive task. We recast style transfer in terms of shape analogies. In IQ testing, shape analogy queries present the subject with three shapes (source, target, and exemplar) and ask them to select an output such that the transformation, or analogy, from the exemplar to the output is similar to that from the source to the target. We observe that the typical analogies we look for consist of a small set of simple transformations which, when applied to the exemplar, generate a continuous, seamless output model. To assemble a shape analogy, we compute an optimal set of source-to-target transformations, such that the assembled analogy best fits these criteria. The assembled analogy is then applied to the exemplar shape to produce the desired output model. We use the proposed framework to seamlessly transfer a variety of style properties between 2D and 3D objects and demonstrate significant improvements over the state of the art in style transfer. We further show that our framework can be used to successfully complete partial scans with the help of a user-provided structural template, coherently propagating scan style across the completed surfaces.
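The analogy idea in the abstract (source is to target as exemplar is to output) can be illustrated with a toy 2D sketch. This is not the method from the paper: it assumes that the shapes are already split into named parts, that source and target points correspond one to one, and that each part's transformation is just a per-axis scale plus offset fitted by least squares. The function names (`fit_axis`, `fit_transform`, `apply_transform`, `transfer_by_analogy`) are hypothetical, and the optimization over a small set of transformations and the seamlessness criteria are left out entirely.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
AxisMap = Tuple[float, float]          # (scale, offset) for one coordinate axis
Transform = Tuple[AxisMap, AxisMap]    # one AxisMap per axis (x, y)


def fit_axis(src: List[float], dst: List[float]) -> AxisMap:
    """Least-squares fit of dst ~ scale * src + offset along one axis."""
    n = len(src)
    mean_s = sum(src) / n
    mean_d = sum(dst) / n
    var = sum((s - mean_s) ** 2 for s in src)
    if var == 0.0:
        # Degenerate axis (all source values equal): fall back to a pure shift.
        return 1.0, mean_d - mean_s
    cov = sum((s - mean_s) * (d - mean_d) for s, d in zip(src, dst))
    scale = cov / var
    return scale, mean_d - scale * mean_s


def fit_transform(src: List[Point], dst: List[Point]) -> Transform:
    """Fit an independent scale and offset per axis from corresponding points."""
    return (
        fit_axis([p[0] for p in src], [q[0] for q in dst]),
        fit_axis([p[1] for p in src], [q[1] for q in dst]),
    )


def apply_transform(t: Transform, pts: List[Point]) -> List[Point]:
    """Apply a fitted per-axis scale and offset to a list of points."""
    (ax, bx), (ay, by) = t
    return [(ax * x + bx, ay * y + by) for x, y in pts]


def transfer_by_analogy(
    source: Dict[str, List[Point]],
    target: Dict[str, List[Point]],
    exemplar: Dict[str, List[Point]],
) -> Dict[str, List[Point]]:
    """For each part, fit source -> target and apply that map to the exemplar."""
    return {
        name: apply_transform(fit_transform(source[name], target[name]), exemplar[name])
        for name in source
    }


# Toy data: the target is the source stretched 2x along x.
source = {"body": [(0, 0), (1, 0), (1, 1), (0, 1)]}
target = {"body": [(0, 0), (2, 0), (2, 1), (0, 1)]}
exemplar = {"body": [(0, 0), (1, 0), (0.5, 1)]}

output = transfer_by_analogy(source, target, exemplar)
print(output["body"])  # [(0.0, 0.0), (2.0, 0.0), (1.0, 1.0)]
```

In this toy case the exemplar triangle keeps its own outline but inherits the horizontal stretch that maps the source to the target, which is the sense in which the output follows the target's structure while retaining the exemplar's look.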

Copyright notice

The definitive version is available at http://diglib.eg.org/ and http://onlinelibrary.wiley.com/.