Facial Performance Sensing Head-Mounted Display

- Hao Li¹
- Laura Trutoiu²
- Kyle Olszewski¹
- Lingyu Wei¹
- Tristan Trutna²
- Pei-Lun Hsieh¹
- Aaron Nicholls²
- Chongyang Ma¹

- ¹ University of Southern California
- ² Oculus & Facebook

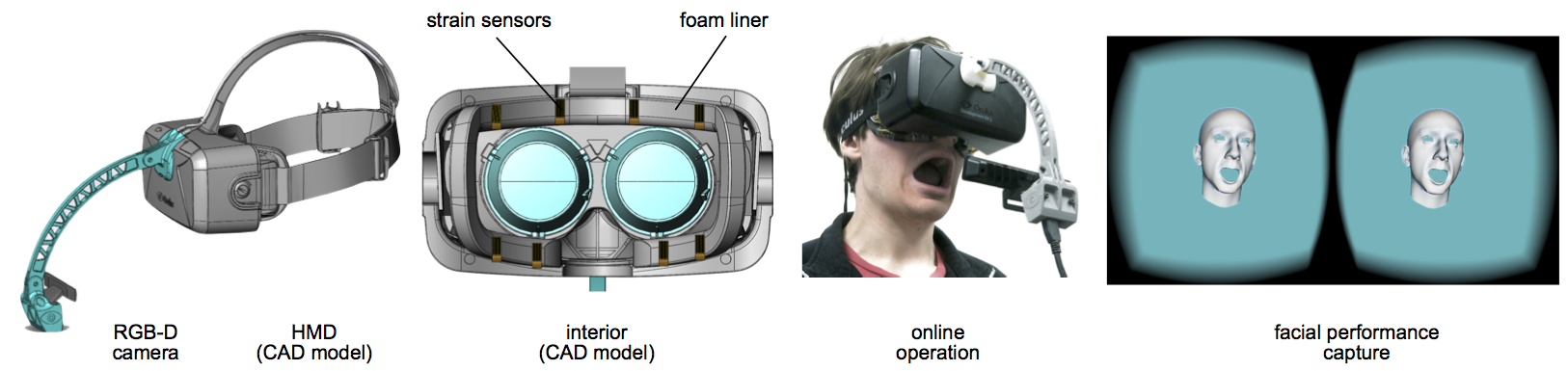

To enable immersive face-to-face communication in virtual worlds, a user's facial expressions have to be captured while the user is wearing a virtual reality head-mounted display (HMD). Because typical wearable displays occlude much of the face, we have designed an HMD that combines ultra-thin strain sensors with a head-mounted RGB-D camera for real-time facial performance capture and animation.

Abstract

There are currently no solutions for enabling direct face-to-face interaction between virtual reality (VR) users wearing head-mounted displays (HMDs). The main challenge is that the headset obstructs a significant portion of a user's face, preventing effective facial capture with traditional techniques. To advance virtual reality as a next-generation communication platform, we develop a novel HMD that enables 3D facial performance-driven animation in real time. Our wearable system uses ultra-thin flexible electronic materials that are mounted on the foam liner of the headset to measure surface strain signals corresponding to upper face expressions. These strain signals are combined with input from a head-mounted RGB-D camera to enhance the tracking in the mouth region and to account for inaccurate HMD placement. To map the input signals to a 3D face model, we perform a single-instance offline training session for each person. For reusable and accurate online operation, we propose a short calibration step to readjust the Gaussian mixture distribution of the mapping before each use. The resulting animations are visually on par with cutting-edge depth sensor-driven facial performance capture systems and are hence suitable for social interactions in virtual worlds.
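The role of the per-use calibration step can be illustrated with a small sketch. This is not the authors' implementation: it swaps the Gaussian mixture readjustment for a much simpler per-channel mean and standard deviation re-estimation, and it assumes a linear map from sensor features to blendshape coefficients. All names (`fit_channel_stats`, `normalize`, `to_blendshapes`), the sensor values, and the mapping parameters are hypothetical.

```python
from statistics import fmean, pstdev


def fit_channel_stats(frames):
    """Estimate per-channel mean and standard deviation from sensor frames."""
    channels = list(zip(*frames))  # transpose: one tuple per sensor channel
    means = [fmean(c) for c in channels]
    stds = [pstdev(c) or 1.0 for c in channels]  # avoid dividing by zero
    return means, stds


def normalize(frame, stats):
    """Standardize one frame using the statistics of the current session."""
    means, stds = stats
    return [(x - m) / s for x, m, s in zip(frame, means, stds)]


def to_blendshapes(weights, bias, features):
    """Hypothetical linear map to blendshape coefficients, clamped to [0, 1]."""
    coeffs = []
    for row, b in zip(weights, bias):
        value = b + sum(w * f for w, f in zip(row, features))
        coeffs.append(min(1.0, max(0.0, value)))
    return coeffs


# Made-up two-channel readings from the one-time training session.
training_frames = [[0.0, 10.0], [1.0, 12.0], [2.0, 14.0]]
# The same expressions after the headset is put on again: every reading is
# transformed by 2x + 5, standing in for a change in HMD placement.
calibration_frames = [[5.0, 25.0], [7.0, 29.0], [9.0, 33.0]]

training_stats = fit_channel_stats(training_frames)
session_stats = fit_channel_stats(calibration_frames)

# Placeholder mapping parameters; a real system would learn these offline.
weights = [[0.5, 0.0], [0.0, -0.5]]
bias = [0.5, 0.5]

features = normalize([9.0, 33.0], session_stats)
print(to_blendshapes(weights, bias, features))
```

Because the features are standardized with statistics from the current session, the mapping trained once offline receives comparable inputs even when the raw signals shift between uses. A mixture model, as described in the abstract, would capture more structure than this single mean and spread per channel.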

Keywords

Real-time facial performance capture, virtual reality, depth camera, strain gauge, head-mounted display, wearable sensors

Media

Featured in MIT Technology Review, Phys.org, Engadget, Wired, Ars Technica, Vice, and fxguide.